Notes on LLM RecSys Product – Edition 5 of a newsletter focused on building LLM powered products.

Four posts into this series on how LLMs are changing product building, we’ve spent all our time on what we build. We’ve talked about why I think LLM-powered recommender systems are going to be the core primitive of product building going forward, and we’ve dug into the power of teacher models, building eval loops, and writing product policy. The next stops will explore how we build and who does the building. But before we do, it is worth pausing.

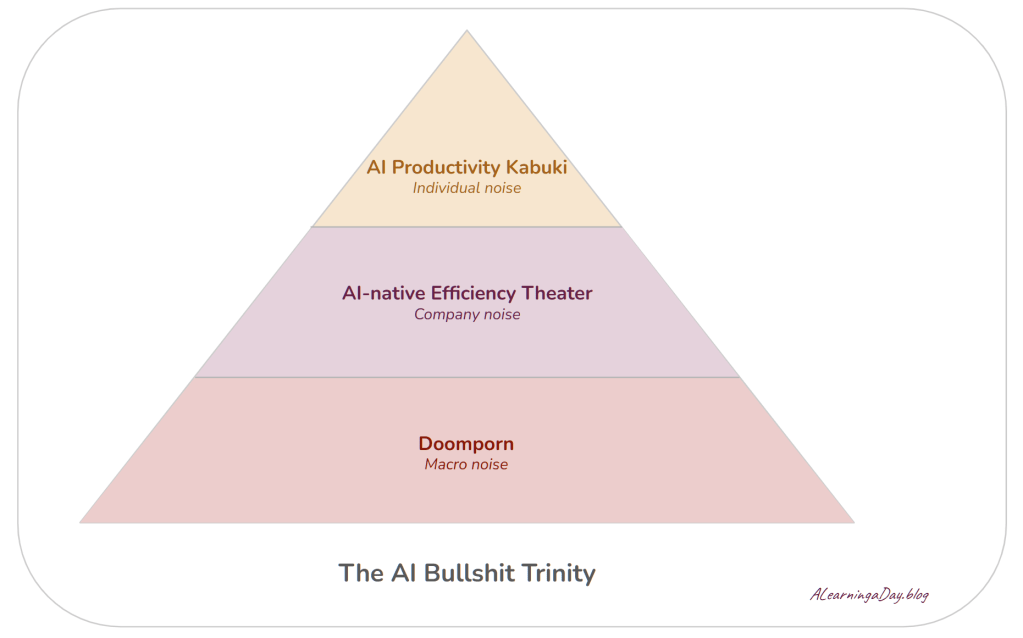

There is an enormous amount of noise around AI right now, and much of it comes from what I think of as the AI bullshit trinity: Doomporn, AI-native efficiency theater, and AI productivity kabuki. It’s worth talking through all three first.

Doomporn

Doomporn is the successor to the hustleporn of a few years ago. We can’t seem to get enough of content telling us that a job apocalypse is coming and that we should start saving up for a bunker now.

Why this is happening

The biggest purveyors of this narrative are the big foundational model labs, which have every incentive to keep the volume turned up (at least until the damage to their brand becomes the dominant factor). Trillion-dollar IPO outcomes require investors to believe the addressable market is all of human labor. In effect, the most apocalyptic framing of AI’s capability is also the most lucrative one. Everyone talks their book.

This isn’t to say the existential concern is fake. Every powerful technology has a dark side, and this one is particularly powerful. The risks are non-zero and worth being thoughtful about. But the trillions of dollars at stake right now muddy the narrative.

What is likely not true

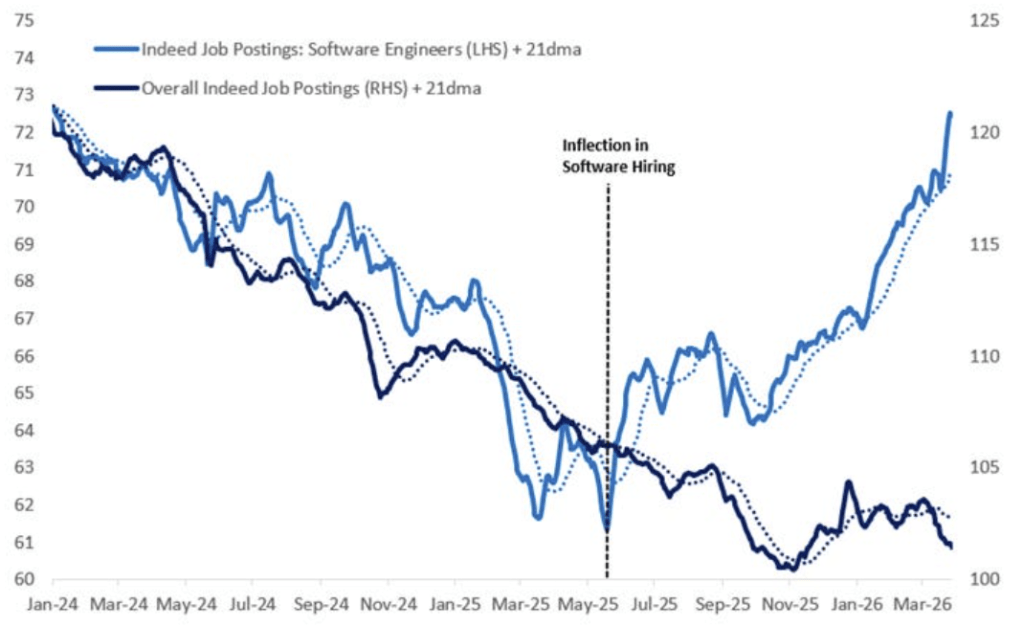

That anyone knows the second- and third-order effects. Labor markets are complex. Here’s an example of an inflection in software engineering hiring on Indeed. It might be Jevons paradox (making something cheaper results in more usage of it), or it might be something else.

Either way, anyone speaking with certainty is either selling you something or performing certainty for an audience.

What looks to be true and useful

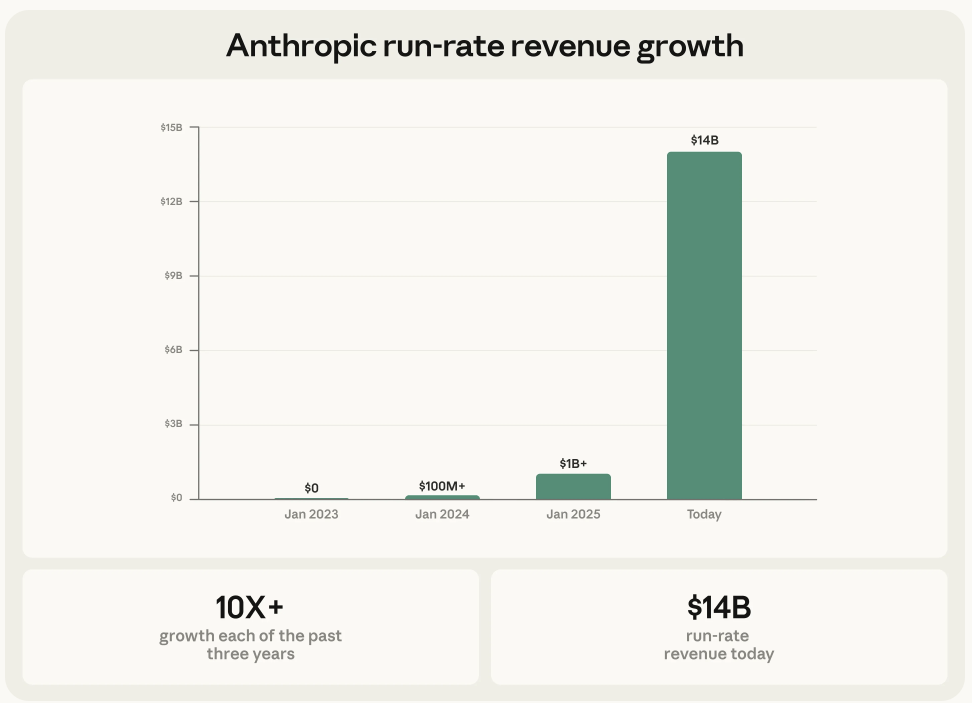

Agentic coding has real product-market fit. The bottleneck for builders has shifted: it used to be how to build, and it is increasingly what to build. That’s a genuine change, and it shows up in Anthropic’s once-in-a-generation growth rates.

The bull case that few talk about amid all this noise is that we could see more small teams that stay small at their terminal size while growing revenue per employee for years. The days of 70% software margins at venture scale may be done. But that doesn’t mean great businesses won’t get built; it means they’ll get built differently. Smaller groups, less coordination overhead, and a longer tail of viable companies and cultures to choose from.

Work might actually become a happier place for many. But nobody is selling us this version, because there’s no trillion-dollar IPO attached to it.

AI-native efficiency theater

Every company wants you to know it is “AI-native.” This layer of bullshit comes from a broad swath of companies playing for hundreds of millions of dollars in valuation upside.

Why this is happening

The valuation pull on being “AI-native” is enormous. Revenue per employee is the new metric Wall Street cares about. Growing the numerator is hard; shrinking the denominator is comparatively easy. And AI provides perfect cover for layoffs that were always coming after the hiring boom of the zero-interest-rate era.

This creates a catch-22 inside large companies. Leadership wants the workforce to adopt AI. The workforce understands that adoption accelerates their own redundancy. Adoption stays halfhearted. Layoffs happen anyway.

What is likely not true

That most organizations have actually cracked it. Building good products is still hard. The old hard things got easier, but the bar moves with the capability.

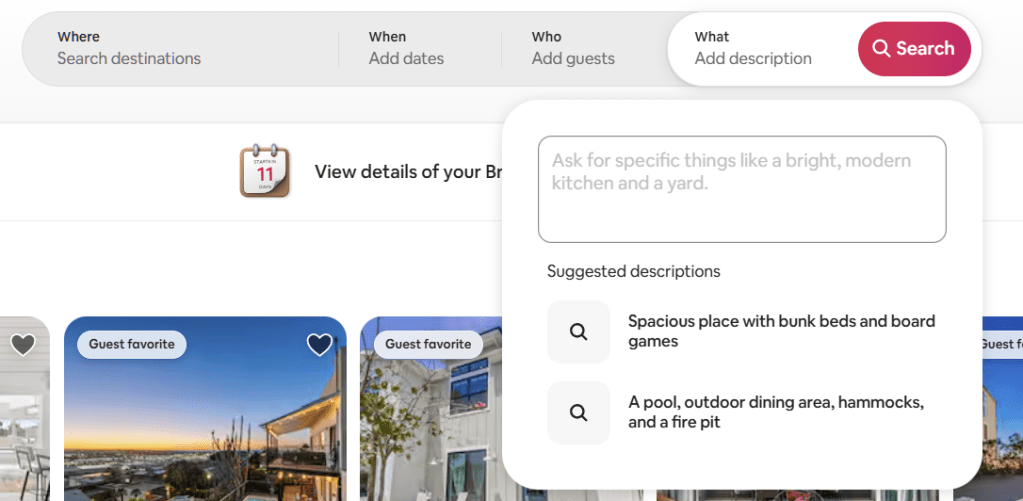

I used to love Airbnb search. Then it started to feel limiting. When natural language search finally arrived, my reaction was “about time.” Expectations go up as execution capabilities go up.

Anthropic’s product velocity is incredible to watch. That said, its consumer app is still very buggy. If the company executing at the absolute frontier, with models that can seemingly fix everything, hasn’t cracked the consumer experience, that’s a good sign it is safe to be skeptical of everyone claiming they have.

This isn’t just a big-company problem, for what it’s worth. The AI-native startups held up as the future have eye-popping valuations, breathless coverage, and impressive technology, but they also have business models that are still unproven and moats that are still unclear.

People come for the magic, but they stay for the math. The math reckoning hasn’t come yet. But it will. All in all, if it smells like bullshit, trust your instincts.

What looks to be true and useful

Individual empowerment is real. Every large organization I know has seen pockets of genuine transformation. The challenge now is creating systems and environments that can scale what’s happening in these pockets.

While this is disproportionately harder for large companies, it is still very hard for small companies too (even with a real “clean slate” advantage). Building agentic organizations is a new craft, and learning a new craft takes time.

AI productivity kabuki

Kabuki is a form of classical Japanese theater: highly stylized, with heavily made-up performers repeating the same dramatic gestures for centuries. The audience knows exactly what move is coming next, and that’s part of the appeal.

The daily AI content cycle works the same way, and its moves are just as predictable: analysis of the latest model release, the AI agent workflow, the many Open-Claude agents running on Mac minis, the new killer use case.

Why this is happening

The incentives are smaller than the trillion-dollar IPO and hundred-million-dollar valuation pulls above, but they are no less real. Career progress and content impressions add up. And the noise itself creates a permanent feeling of being behind, which makes the content even more compelling to produce and consume. It’s a loop.

What is likely not true

That staying on top of every new tool is the same as getting better at building. Or that the person who knows the most tools is the best builder. Or that using AI to do something faster means it was worth doing.

Using AI to summarize 16 newsletters you never read isn’t productivity. Running an agent to manage an overscheduled kid’s calendar isn’t a solution; it automates a problem you created. The worst thing we can do is get really efficient at something that shouldn’t be done at all.

What looks to be true and useful

Spend time with Claude Code or Codex. Understanding what agentic coding actually unlocks will go a long way toward designing systems at work that make the most of what these tools enable.

Real change is going to flow from systems change. And our ability to design systems follows our ability to understand what can be possible.

Three takeaways

1. Name it to neutralize it. The reason we just spent a post deconstructing all three is simple: when you realize everybody is talking their book, it becomes a lot easier to ignore the noise and focus on what’s real.

2. There’s plenty that’s real underneath. We have access to power tools today, and it is still very early. We’re still figuring out how to use them — as individuals, as teams, as systems, as companies. Building that craft is going to take time, and that’s where the real opportunity is.

3. Play offense. A lot of people are stuck right now because the noise has them in a defensive crouch. It is tempting to keep worrying about how we’ll become obsolete. Don’t. Use this time to play offense — to think through how to become more effective. And effective doesn’t mean automating things you shouldn’t have been doing anyway. It means getting better at the skills that actually matter, and then designing systems that scale that ability to more people.

Our next stop will be to work through the skills that matter for building products today.